WorkoutEvaluator: An iOS App That Provides Personalized Exercise Feedback Through User-provided Data


Introduction

Background

This Senior Comprehensive Project addresses a problem within the fitness domain: the lack of accessible, personalized feedback in weightlifting training. Millions of people visit gyms each day to improve their health and performance, yet many struggle to determine whether they are training effectively. Questions such as “Am I using the right form?”, “How much weight should I lift?”, and “Is my progress on track?” often go unanswered without a personal trainer or advanced tracking. To bridge this gap, my iOS application provides real-time feedback, driven by user-inputted data, that is designed to guide users in their training.

The gym lifestyle has been actively growing for over twenty years. In 2000, around 32.8 million people had active gym memberships in the United States. Since then, that number has more than doubled to approximately 77 million in 2024, with predictions estimating 85–90 million memberships by 2030 (Kerxhaliu). As the number of gymgoers continues to rise, so does the portion of this population seeking feedback, whether through phone applications, personal trainers, or wearables. Currently, about 29% of gymgoers work with personal trainers (Kerxhaliu). However, the average hourly rate for a professional personal trainer ranges from $40 to $70 ([6]), which can significantly increase the cost of maintaining a fitness routine. What if there were a way to provide this guidance in a simple, free iOS app on your phone?

In this day and age, almost everyone carries their smartphone wherever they go, whether to work, to dinner, to their children’s soccer games, or to the gym. This constant presence presents a unique opportunity: fitness guidance and feedback can be delivered directly to users’ hands at any time and from anywhere. By leveraging this accessibility, an app can provide the personalized, real-time feedback that existing tools have not necessarily delivered. In 2024, fitness apps were downloaded a total of 850 million times, yet only 345 million people actively used them ([2]). This gap highlights a clear opportunity for apps that not only attract users but also maintain engagement by delivering meaningful, easy-to-access insights. My iOS application aims to fill this gap, offering concise and actionable workout guidance that keeps users motivated and informed without requiring additional cost or equipment.

This raises the question that this project aims to answer: can feedback and analysis be provided to users through a simple, concise, and free iOS application?

Overview of WorkoutTracker Application

This project includes a working iOS application with all core components implemented. The app is built around three main ideas: workout progress tracking, evaluation and feedback, and local data storage.

Workout progress tracking consists of two areas. The first is logging a workout. In my app, the goal is to keep this simple by taking in user-inputted data that consists of muscle group, exercise, weight, and repetitions. Logging these four values takes the user under fifteen seconds, so a set can be recorded directly after it is completed without taking minutes away from the workout. Once the user inputs the data, it is sent to two places: the workout log and the visuals. In the workout log, the user can readily view the information they provided along with the date it was completed, which lets them review every exercise they completed on any given day. The second area is the visuals. The user can see the data for any specific exercise they have entered, which helps them discover trends and progress. For example, a user can easily recognize whether they are bench pressing more weight than last time and how much that lift has progressed in the last month. The visuals are a major emphasis of this project, because giving the user a simple way to see whether they are progressing or regressing goes a long way in a fitness app.

Providing evaluation, in my opinion, is the main emphasis of this project. Among the fitness apps I have tried, none provides concise and specific feedback to aid the user in their gym journey. In my opinion, feedback that is too extensive or too complicated can be off-putting for users. Many apps present the user with ten different graphs or ten different data points, but much of the time the user may not understand what they are looking at. There has to be a way to give the user feedback in two to four bullet points that helps them recognize what is good and what is bad, so that they do not get stuck in a loop of building bad habits. This is what will make my app stand out. For example, if the user logs exercises for a certain muscle group more than two to three times a week, my app will tell the user that they are overtraining that muscle group. The basic science is that every time you train a muscle group, you create micro-tears in the muscle that need time to heal and grow back bigger and stronger. If the muscle does not get that time to rest and recover, you essentially will not see progress. New gymgoers often do not know things like this, so why not aid them in this journey? The evaluation will also assess trends. Am I progressing or regressing over the last month? My app will let the user know immediately after a data entry. Providing this feedback, in my opinion, is the missing puzzle piece in the fitness industry.
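
The overtraining rule described above can be sketched in Swift as follows. This is a minimal illustration rather than the app's actual code: the `LoggedSet` type, the `overtrainedGroups` function, the seven-day window, and the threshold of three training days per week are all assumptions made for the example.

```swift
import Foundation

// Hypothetical record of one logged set; the field names are assumptions.
struct LoggedSet {
    let muscleGroup: String
    let date: Date
}

/// Returns the muscle groups trained on more than `maxDaysPerWeek` distinct
/// calendar days during the seven days ending at `now`, sorted by name.
func overtrainedGroups(in sets: [LoggedSet],
                       now: Date,
                       maxDaysPerWeek: Int = 3,
                       calendar: Calendar = .current) -> [String] {
    guard let windowStart = calendar.date(byAdding: .day, value: -7, to: now) else {
        return []
    }
    // Collect the distinct training days for each muscle group.
    var daysByGroup: [String: Set<Date>] = [:]
    for entry in sets where entry.date > windowStart && entry.date <= now {
        let day = calendar.startOfDay(for: entry.date)
        daysByGroup[entry.muscleGroup, default: []].insert(day)
    }
    // Keep only the groups that exceed the weekly limit.
    return daysByGroup
        .filter { $0.value.count > maxDaysPerWeek }
        .keys
        .sorted()
}
```

Counting distinct days instead of individual sets means that several sets for the same muscle group in one session count as a single training day.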

Reaching the point of local data storage was an important milestone in developing my app. Local storage allows the app to save information directly on the user’s device, which is essential for reliability, performance, and user experience. It does this by saving a JSON file on the user’s device. JSON (JavaScript Object Notation) is a format used to store and organize data in a structured way using key-value pairs. It is easy for humans to read and for computers to process, making it well suited to app development. In my iOS app, a JSON file can store information such as workouts, exercises, or personal records locally. This keeps the app’s data organized and easily accessible for future use or syncing. Because data is stored locally, users can still access and record their workouts without an internet connection, making the app functional anywhere, including gyms or areas with poor service. Local storage also ensures data persistence, meaning user progress and personal records are saved between sessions instead of being lost when the app closes. Additionally, it enhances performance because retrieving information from the device is much faster than communicating with a remote server. Lastly, it supports better privacy and security, since sensitive fitness data remains on the user’s device, giving users greater control over their personal information. Security in 2025 is more important than ever, as data breaches and hacking attempts have become increasingly common. Because my app prioritizes secure data handling, users can feel confident that their personal information is protected. This added layer of security helps build trust and provides peace of mind while using the app.
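
To make the JSON-based storage concrete, the sketch below shows one way workout entries could be written to and read from a local file using Swift's `Codable` support. It is an illustration under stated assumptions, not the app's actual storage layer: the `WorkoutEntry` type, its field names, and the `saveEntries` and `loadEntries` helpers are hypothetical.

```swift
import Foundation

// Hypothetical model of one logged set; the field names are assumptions.
struct WorkoutEntry: Codable, Equatable {
    let muscleGroup: String
    let exercise: String
    let weight: Double
    let reps: Int
    let date: Date
}

/// Encodes the entries as JSON and writes them to `url` atomically.
func saveEntries(_ entries: [WorkoutEntry], to url: URL) throws {
    let encoder = JSONEncoder()
    encoder.dateEncodingStrategy = .iso8601   // human-readable dates, whole seconds
    encoder.outputFormatting = [.prettyPrinted, .sortedKeys]
    let data = try encoder.encode(entries)
    try data.write(to: url, options: .atomic)
}

/// Reads the JSON file at `url` and decodes it back into entries.
func loadEntries(from url: URL) throws -> [WorkoutEntry] {
    let data = try Data(contentsOf: url)
    let decoder = JSONDecoder()
    decoder.dateDecodingStrategy = .iso8601
    return try decoder.decode([WorkoutEntry].self, from: data)
}
```

In an iOS app, the file URL would typically point into the app's own Documents directory, which can be obtained with `FileManager.default.urls(for: .documentDirectory, in: .userDomainMask)`. The atomic write option is chosen here so that an interrupted save does not leave a half-written file behind.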

The app was developed using Xcode and is currently tested directly on my iPhone through Developer Mode. My primary focus at the beginning of the process was learning a new programming language, Swift, while also understanding how to code, commit, and push changes to a GitHub repository within this new environment. Although Xcode took some time to get comfortable with, trial and error combined with YouTube tutorials—especially those focused on Swift basics, SwiftUI interface design, and Xcode project setup—helped me progress quickly. Comparing Swift to Python also gave me valuable insight into how each language handles certain functions and where Swift performs better for iOS app development.

I leveraged SwiftUI for the interface and used environment objects for data handling. The project was managed through GitHub, ensuring version control and consistent code saving. Testing on my personal iPhone required enabling Developer Mode, registering my device with Xcode, and configuring the necessary provisioning profiles to allow the app to run outside the simulator.

Xcode also provides the option of using “simulators” to run and test apps in development. A simulator is a software tool that mimics the behavior of an actual iPhone or iPad on your computer, allowing you to see how your app would look and function without needing a physical device. However, the downside is that the app may look or behave differently on a real iPhone. Therefore, testing on a physical device was essential to ensure accurate appearance and functionality.

Personal Motivation: “Why Me? Why This Project?”

This project aligns closely with my personal and academic interests in health technology and mobile app development. I have always loved video games, and as I got older, I became interested in understanding how they work behind the scenes. This project allowed me to finally explore that process and develop my own application from the ground up. I am also deeply passionate about health and wellness, so being able to bridge the gap between fitness and software development feels both meaningful and exciting. It represents a step toward creating technology that not only functions well but also has a real impact on people’s daily lives.

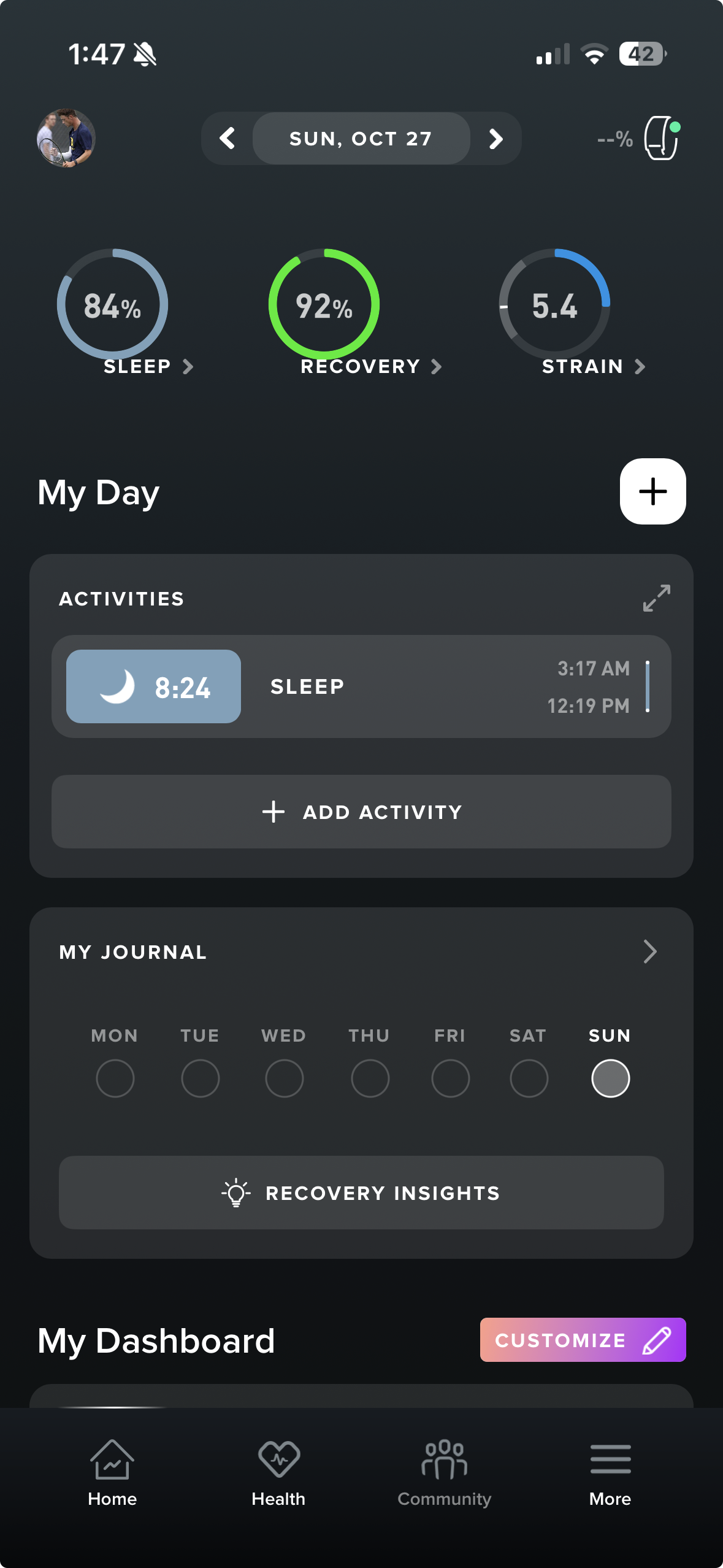

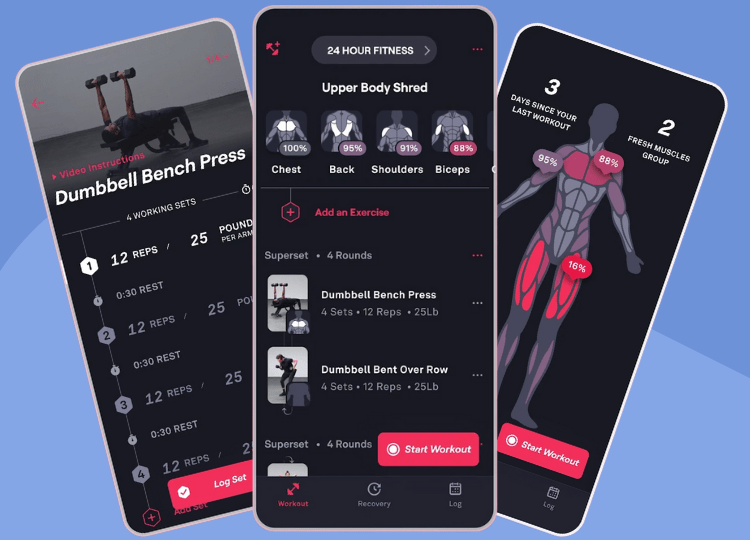

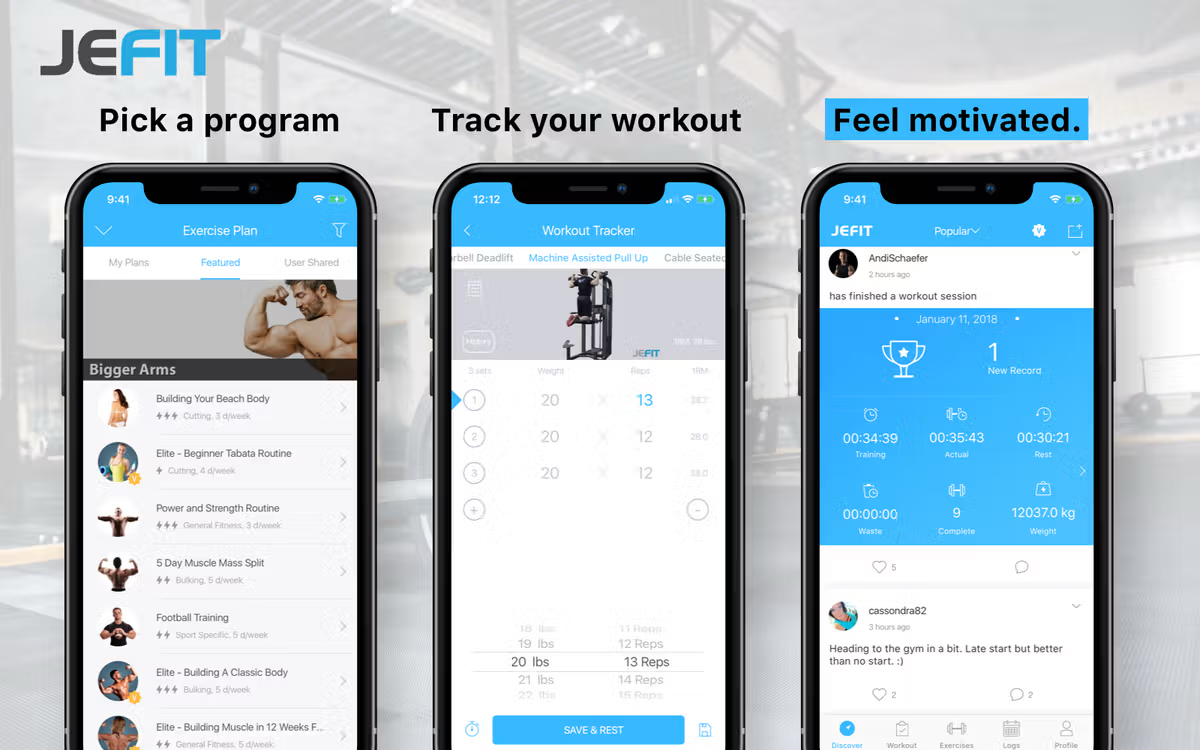

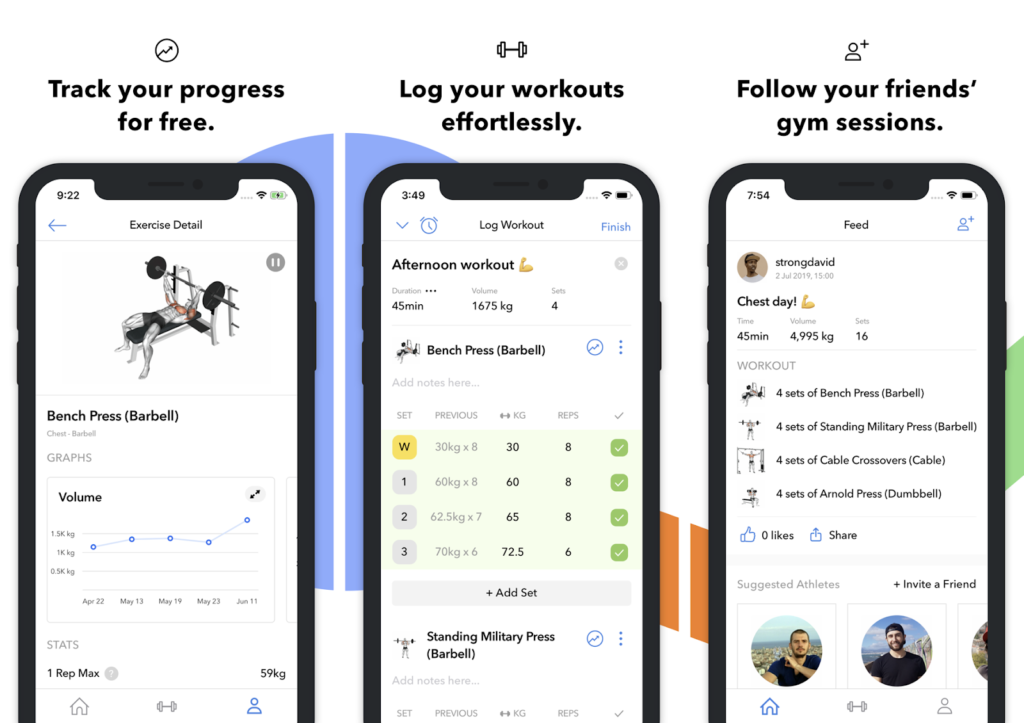

I have been an athlete my whole life, from my earliest years through college. I played soccer as soon as I could walk and run, continued with tennis up until my junior year of college, and have tried just about every sport imaginable. Sports have always been a central part of my life, keeping me active every day, whether through clubs or just playing with friends. This routine changed during my junior year of college when I left the Allegheny College Men’s Tennis Team due to a lingering shoulder injury. After playing sports my whole life, it felt strange not to be actively involved in a sport or hobby. To stay active, I decided to pursue a consistent gym lifestyle, going five to six days a week. Thankfully, I already had some experience in the gym through teams and clubs, but without that foundation, I would have had no idea where to start or how to define “success.” Through trial, learning from friends, and watching YouTube tutorials, I gradually gained knowledge and confidence in the gym. That experience sparked the idea: what if there was an app that could track my progress while providing personalized feedback to help me stay on track? I began experimenting with fitness apps like Whoop, Strong, Fitbod, and Hevy, but none provided exactly what I was looking for. That gap inspired me to develop my own app, tailored to my fitness goals and workflow. This process is especially unique because I am both the developer and a user of the app. Being able to experience the app firsthand allows me to constantly evaluate features from a real-world perspective, ensuring usability, simple design, and functionality. This dual role enables me to iterate quickly and create an application that is both practical and engaging for users like myself.

This background is why I feel particularly well-qualified for the developer role on this project. My academics at Allegheny College’s CIS department have also provided me with a strong foundation in computer science, equipping me with the technical knowledge and problem-solving skills needed to successfully design and implement a mobile application. Over the course of my studies, I have taken courses across nearly every area of computer science, including software engineering, web design, computer security, and programming languages. This breadth of exposure has allowed me to gain hands-on experience with multiple coding languages and frameworks, giving me the ability to approach the technical challenges of app development.

Two courses, in particular, have shaped my ability to contribute effectively to this project: Software Engineering and Web Design. Software Engineering provided me with experience in professional development workflows, including collaborative sprints, version control, and team-based problem solving. I learned how to write code that is not only functional but also readable and maintainable by other team members, which is a critical skill for any large-scale project. Understanding each function and code block in depth allows me to confidently iterate and expand the app while avoiding errors. Web Design, meanwhile, highlighted the importance of user interface and user experience. Developing a clean and visually appealing interface is crucial for engaging users, particularly in a mobile app where ease of navigation and clarity are essential. The lessons from this course ensure that the app I am building is not only technically advanced but also user-friendly.

Beyond my academic preparation, my personal experiences have shaped my motivation and insight into this project. As a lifelong athlete, I have spent countless hours understanding training, fitness, and performance, which gave me firsthand knowledge of what users look for in a health and fitness app. Transitioning from organized sports to independent gym training revealed a gap in available tools for tracking and improving personal fitness progress. By combining this personal experience with my technical expertise, I can create an application that addresses real user needs.

Overall, my combination of technical training, practical experience, and personal motivation provides a strong foundation for this project. I am confident in my ability to develop an engaging and functional app that bridges the gap between software technology and health and wellness.

Project Motivation

This project is driven by the goal of combining key areas of computer science, data analysis, and mobile system integration to create a functional and efficient fitness technology solution. It provides practical experience in app development, data integration, and skills that are valued in the modern technology industry. Through the development of a mobile application that collects, processes, and evaluates the data, the project simulates the type of real-world problem-solving encountered in software engineering and health technology fields.

This project is also motivated by the integration and correlation of scientific and technical principles to advance personal fitness through mobile app development. It applies the core STEM disciplines of data evaluation, computer science, and software engineering to design and implement an iOS application that takes in user-inputted data and automatically provides concise feedback.

Science and data represent one of the most direct connections between this project and STEM disciplines. The iOS application collects user-inputted fitness data and performs automated analysis to interpret and present this information meaningfully. The collected data is utilized in multiple ways, primarily for evaluating user performance and progress over time. By processing the data into both structured logs and dynamic visualizations, the app enables users to identify trends, track new personal records, and observe progression or regression across various metrics. Data analysis plays a key role for both the user and the backend system. Users interact with visual representations such as graphs to clearly view their performance history and identify patterns, while the backend continuously updates and organizes the dataset to support accurate and real-time insights.

Technology and engineering also play a heavy role in this project. Building a mobile app with real-world functionality demonstrates the practical application of engineering principles in software design and system development. The project uses Apple’s development ecosystem, including Swift and Xcode, to create an efficient mobile app. Through these tools, the app integrates user interfaces, local data storage, and data analysis. From an engineering standpoint, the project requires consistent testing and debugging to ensure complete functionality and accuracy. These processes mirror the real-world design cycle of designing solutions, implementing systems, and refining them based on testing. Applying these principles within this project reinforces the integration of technology and engineering.

This project contributes to the broader field of public health and fitness engagement by allowing users to take an active role in understanding and improving their performance in the gym. Through insights driven by user-inputted data and personalized analytics, the iOS application encourages consistent use and progress tracking, making health more accessible and engaging. By visualizing performance trends and progress over time, users can make informed decisions about their fitness routines and lifestyle habits. This approach promotes a stronger connection between personal data awareness and long-term health outcomes. Research has shown that consistent exercise provides a variety of benefits, including stronger bones, improved mood, and a lower risk of chronic illnesses like diabetes and heart disease (Ahmed). Given this, the ability to quickly and freely track progress creates benefits that go beyond benching more weight than last month.

In addition to promoting user engagement, the project addresses critical considerations surrounding data privacy and security, which are a growing concern. More and more mobile devices are being compromised through phishing emails or users clicking malicious links, and data leaks in particular have become a serious problem. In 2024 alone, the number of data compromises in the United States was 3,158, which is only 1% under the record number of compromises tracked within one calendar year (Identity Theft Resource Center). This emphasizes the importance of local data storage for this project. All the data that a user has within the application is stored directly on the user’s mobile device. This significantly reduces exposure to potential data breaches and ensures that sensitive information remains under the user’s direct control.

The target audience for this project includes individuals who are seeking to learn more about their fitness habits and gain experience with technology-assisted self-improvement before committing to more advanced or intensive programs. By combining accessibility, automation, and educational value, the application serves as a bridge for users looking to develop a deeper understanding of their health through technology.

Ethical Implications

Several ethical considerations need to be acknowledged and discussed for this Senior Comprehensive Project. The most important are data security, bugs, and false user recommendations. Addressing these concerns provides context for what I created and how I created it.

First, it is important to acknowledge the use of personal data. Because this is an open mobile application that could attract a large number of users, it is important to be clear about how their data is used. As the developer of this application, I have no access to the information that users put into their profiles. This data is stored on their mobile device and nowhere else. The app has no data-sharing features, so no user data leaves the device through the app. I acknowledge that other apps let users share data with friends or communities within the app. After researching this concept further, I decided that it would be better and safer to keep the data solely for the user’s own use. While there are benefits to comparing data with friends and communities, the risk of users’ data being jeopardized is a much more serious matter. This concern also shapes why I am designing my experiment in this specific fashion.

At first, I thought it would be much more beneficial to conduct an experiment utilizing colleagues to gather responses based on their use of the app and how it helped them progress in the gym. While this experimental idea had its benefits, it had many different ethical implications that had to be considered. I decided to avoid these implications by developing the idea of testing the app through AI-generated data. This experiment will provide me with all the information that I desire in a way that does not conflict with any ethical considerations. This brings me to another thought: what if my evaluation could steer a user in the wrong direction?

Because this application emphasizes feedback that aids users in their gym journey, is it possible that a false recommendation could affect their mindset? This is important to note and examine further. Once a user inputs their data, they receive feedback on the evaluation page that depends on that data. This feedback may range from letting the user know that they have exercised a muscle group too many times this week to a suggestion such as “You did twelve reps; maybe try increasing the weight to stay within a rep range of six to ten.” Feedback like this may give the user new knowledge that changes how they approach their workout. It is critical to understand that the app is a tool to supplement informed decision-making rather than a substitute for professional advice. By acknowledging the limitations of data-driven models, the application promotes responsible use and encourages users to interpret their feedback and combine it with personal judgment.
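
The rep-range suggestion mentioned above could be expressed as a small rule like the one sketched here. The `RepFeedback` enum and `repFeedback` function are hypothetical names, and the suggestion to lower the weight when reps fall below the range is an assumption added for symmetry; the text only describes the case of exceeding the range.

```swift
// Possible outcomes of comparing a set's reps against a target range.
enum RepFeedback: Equatable {
    case increaseWeight   // reps above the range: the weight is likely too light
    case decreaseWeight   // reps below the range (assumed rule, not from the text)
    case inRange
}

/// Compares the logged reps against a target range (six to ten by default).
func repFeedback(reps: Int, targetRange: ClosedRange<Int> = 6...10) -> RepFeedback {
    if reps > targetRange.upperBound { return .increaseWeight }
    if reps < targetRange.lowerBound { return .decreaseWeight }
    return .inRange
}
```

For example, `repFeedback(reps: 12)` returns `.increaseWeight`, which matches the twelve-rep case described above. Keeping the range as a parameter leaves room for different training goals without changing the rule itself.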

Even with careful development and testing, the code may contain bugs or unexpected behaviors that could impact the user experience or the accuracy of the data analysis. In the context of a fitness application, such bugs could lead to incorrect feedback, misrepresented progress, or failure to update the user’s personal records. For example, a calculation error could suggest that a user has reached a personal record when they have not, or fail to warn them about overtraining a muscle group. From an ethical perspective, acknowledging and mitigating the risks of bugs is essential. This includes rigorous testing and providing mechanisms for users to report errors or inconsistencies. By planning for potential software errors and maintaining transparency, the application prioritizes user trust, safety, and well-being.

As there are many ethical implications to consider within this application, I have developed this application in a specific fashion to avoid many possible ethical issues. These include local storage of user data to ensure privacy, conducting an experiment that handles AI-generated data instead of dealing with human data, acknowledging the fact that this app may provide a false recommendation due to model limitations, and proactively testing the code to avoid the possibility of bugs arising.

Structure of Senior Comprehensive Project

This project is organized into five chapters. Chapter one introduces the project, including its professional and technical motivation, relevance to STEM disciplines, ethical considerations, and overall structure. Chapter two reviews related works and literature, compares similar applications, and provides the technical context. Chapter three describes the methods used in developing the iOS application, including system design, code architecture, and UI/UX decisions. Chapter four presents experimental results, including testing outcomes, user feedback, data validation, and performance evaluation. Finally, Chapter five discusses the results, highlights potential improvements, explores broader applications, and suggests directions for future research. This structure ensures a clear and logical presentation of the project from conception to evaluation and implications.

Contributions of the Project

This project contributes a custom iOS application that integrates workout tracking, data analysis, and personalized feedback within a unified, simple, user-friendly mobile platform. Unlike other fitness applications, this system is tailored to individual user-inputted data and emphasizes the interpretation of the data rather than just simple data logging and visuals. The application includes six separate pages, covering history, charts, evaluation, awards, and the user profile view.

The contribution also includes a local user login and account creation system and an entirely device-based data storage model built in Swift. This design demonstrates the importance of local data storage within my application. As discussed earlier, preventing data leaks is the primary reason for storing data locally.

The main landing page, once the user has logged in, is the history or workout log page. This page contains all the specific exercises the user has completed. Once a user inputs their data for an exercise, including weight, repetitions, and muscle group, this data will show up within the history page along with a date. The workout log is an essential piece of content to include when creating a fitness app, as it allows users to rewind in time to see their past data. Keeping this page simple is also important because as this page gets more complicated, it may complicate the user’s ability to easily view past workouts.

Once new data is added to the History page, it is automatically reflected in the Charts page. The Charts page continuously monitors for newly recorded data points and updates the corresponding exercise-specific graph whenever a new entry is detected. Each graph visually represents trends over time, allowing users to clearly observe their progression in the gym. These visualizations not only make performance trends easier to interpret but also help users review their previous workouts to determine appropriate starting points for future sessions.

After that, the evaluation page also updates. It gives the user simple and concise feedback, including timely insights into whether they are progressing or regressing and feedback on their training routine, such as whether they should train a certain muscle group more or less. I emphasize short and concise feedback because I do not want to overload the user with information.
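
One simple way to decide whether a lift is progressing or regressing is sketched below. This is only an illustration under assumptions: it compares the earliest and latest logged weights for one exercise within a 30-day window, and the `DatedWeight` type, `Trend` enum, and `trend` function are hypothetical names. The app's actual evaluation logic may use a different rule.

```swift
import Foundation

// Hypothetical data point for one exercise: when it was logged and the weight used.
struct DatedWeight {
    let date: Date
    let weight: Double
}

enum Trend: Equatable {
    case progressing, regressing, flat, notEnoughData
}

/// Compares the first and last logged weights inside the trailing window.
func trend(for history: [DatedWeight], now: Date, windowDays: Int = 30) -> Trend {
    let windowStart = now.addingTimeInterval(-Double(windowDays) * 86_400)
    let recent = history
        .filter { $0.date >= windowStart && $0.date <= now }
        .sorted { $0.date < $1.date }
    // At least two data points are needed to say anything about direction.
    guard recent.count >= 2, let first = recent.first, let last = recent.last else {
        return .notEnoughData
    }
    if last.weight > first.weight { return .progressing }
    if last.weight < first.weight { return .regressing }
    return .flat
}
```

A first-versus-last comparison is easy to explain in a single bullet point, which fits the goal of short feedback, although it ignores what happens between the two endpoints.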

This brings us to the awards page, which is meant to bring gamification to my mobile app. The goal of this page is to give the user a reason to stay consistent and keep coming back to the app. Many fitness apps contain some type of gamification because it helps users stay engaged. An example of an award would be entering a workout on 100 days; an award like this gives users an incentive to keep going to the gym and bettering themselves each day.

Finally, there is the profile page. This is a very simple page with a few key details, including personal records and the number of workouts the user has logged. It also shows the user their personal record total, meaning the combined weight of their best squat, deadlift, and bench press. Many gymgoers like to know this number, as it gives them another incentive to keep training hard.
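
The personal record total described above can be computed with a short pass over the logged sets. The following is a hedged sketch: the `LiftEntry` type, the function names, and the exact exercise names used as dictionary keys are assumptions for illustration.

```swift
// Hypothetical logged set reduced to the two fields this calculation needs.
struct LiftEntry {
    let exercise: String
    let weight: Double
}

/// Returns the heaviest logged weight for each exercise.
func personalRecords(from entries: [LiftEntry]) -> [String: Double] {
    var records: [String: Double] = [:]
    for entry in entries {
        records[entry.exercise] = max(records[entry.exercise] ?? 0, entry.weight)
    }
    return records
}

/// Sums the personal records for the given lifts; a lift with no entries counts as zero.
func personalRecordTotal(from entries: [LiftEntry],
                         lifts: [String] = ["Squat", "Deadlift", "Bench Press"]) -> Double {
    let records = personalRecords(from: entries)
    return lifts.reduce(0) { $0 + (records[$1] ?? 0) }
}
```

With best lifts of 245 for the squat, 315 for the deadlift, and 185 for the bench press, `personalRecordTotal` would return 745, regardless of any other exercises in the log.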

These components form the core of my application and reflect months of learning, experimentation, and iteration. I began studying app development—including the Xcode environment and the Swift programming language—in January 2024. Since Swift was a new language for me, I wanted to build a strong foundation in its functions, structures, and syntax before fully committing to development. I approached this by comparing Swift to languages I was already confident in, such as Python, Java, and C. This comparative learning strategy helped me see how the concepts I had mastered in previous coursework translated into Swift, ultimately giving me a more complete and intuitive understanding of iOS development. This learning process also deepened my understanding of development methodology. Most importantly, it taught me the value of continuous testing and troubleshooting—principles that cannot be emphasized enough. Early in development, I also learned the importance of feasibility testing. This involves prototyping and evaluating an idea before fully implementing it. A concept may seem perfectly reasonable when discussed in theory, but its feasibility often becomes much clearer once development begins. Some ideas that sound simple in conversation can become significantly more complex when put into practice. It is crucial to understand what can realistically be built. Once a developer commits to delivering a feature, users expect that promise to be fulfilled. Because of this, knowing the limits, challenges, and feasibility of an idea before promising it is an essential part of responsible development. By integrating feasibility testing early and often, I was able to refine my ideas, avoid unnecessary roadblocks, and move forward with confidence in the parts of the application I chose to implement. This leads to the importance of a thorough experiment.

The goal of my experiment is to thoroughly evaluate each part of my application. Doing so will give me the knowledge and feedback needed to guide the final development sprint.

For this experiment, I will utilize AI-generated data to simulate realistic user interactions and workout patterns within the application. Using AI-generated inputs allows me to test the system under controlled, repeatable conditions without relying on human data at this stage of development. This approach ensures that I can evaluate each feature of the app—including data processing, visualizations, and system responsiveness—using consistent and diverse datasets. AI-generated data also enables me to stress test the application and identify potential issues early. By incorporating this method, I can gather meaningful insights and feedback that will directly inform and improve the final development sprint. While AI-generated data is highly useful for early-stage testing, it is important to acknowledge both its strengths and its limitations. AI data provides clean, structured inputs that allow for controlled experimentation, making it ideal for identifying technical issues, validating core functionality, and ensuring the app behaves as expected across a wide range of scenarios, in this case across different mobile devices. However, AI-generated data cannot perfectly replicate the unpredictability and variability of real human behavior. Because of this, the results of AI-based testing must be viewed as preliminary. Even with these limitations, AI-generated data remains a powerful tool that enables efficient, iterative testing and helps streamline the development process.

This experiment aims to provide me with feedback on graph compression, responsiveness, data processing, and query times. Because visuals are a key component of this application, they must be clean and legible. Some of the research questions this experiment aims to answer are as follows:

- How will the graphs look with one year’s worth of user data?

- How will the graphs compress?

- Will the data points be too close together?

These questions are all essential to the success of my application. Without answers to them, the app would feel incomplete. Closely related is responsiveness, which is a top priority in app development. Developers pride themselves on delivering a fun and engaging user experience, and if the app takes ten seconds to load a specific page, users will most likely find a different application. An essential question is how much data it takes to slow the app down, or whether it slows down at all. Providing the application with years’ worth of data will answer this question. The experiment will examine responsiveness when moving between pages, loading the application, and responding to touch. Query times are closely related. Within the application, there are many different search queries, such as personal record lookups and loops that search for a specific exercise. How will these be affected when they have to look through 1000 or 5000 data points? Evidence that the app can run these queries without a noticeable increase in time would be a strong argument for my application.
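The query-time question above could be measured with a small harness like the following. This is only a sketch under stated assumptions: `SampleEntry`, `makeSyntheticEntries`, and `timedSquatPR` are hypothetical names, and the synthetic values are placeholders rather than the AI-generated dataset used in the experiment.

```swift
import Foundation

// Hypothetical stand-in for the app's entry model; only the fields used here.
struct SampleEntry {
    let exercise: String
    let weight: Double
    let reps: Int
}

// Build `count` synthetic entries that cycle through a few exercises.
func makeSyntheticEntries(count: Int) -> [SampleEntry] {
    let exercises = ["Bench Press", "Squat", "Deadlift"]
    return (0..<count).map { i in
        SampleEntry(exercise: exercises[i % exercises.count],
                    weight: Double(100 + (i % 50)),
                    reps: 5 + (i % 6))
    }
}

// Run a personal-record query and return the result with the elapsed seconds.
func timedSquatPR(in entries: [SampleEntry]) -> (pr: Double, seconds: TimeInterval) {
    let start = Date()
    let pr = entries
        .filter { $0.exercise.lowercased().contains("squat") }
        .map { $0.weight }
        .max() ?? 0.0
    return (pr, Date().timeIntervalSince(start))
}

// Compare the same query at the two dataset sizes named in the text.
for size in [1000, 5000] {
    let result = timedSquatPR(in: makeSyntheticEntries(count: size))
    print("\(size) entries: PR \(result.pr) lbs in \(result.seconds) s")
}
```

Running the same query at both sizes gives a direct, repeatable comparison. Timings from a single run are noisy, so averaging several runs on a real device would give a fairer picture.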

In conclusion, this experiment is designed to stand in place of a human study. The AI-generated data allows me to experiment in a controlled environment, and its clearly defined variables are a strength of the design.

Method of approach

Overview of WorkoutTracker

WorkoutTracker is a dynamic fitness app designed to help users log, monitor, analyze, and receive evaluations on their workouts in a seamless and visually engaging way. Beyond simply recording exercises, the app provides charts, trend analysis, and personalized evaluations, allowing users to track progress, identify patterns, and receive actionable insights on strength, endurance, and fatigue. Its architecture emphasizes a single source of truth for workout data, ensuring that every logged session automatically updates history views, progress visualizations, and predictive assessments in real time. The intuitive interface and integrated analytics make it a tool not just for tracking workouts, but for optimizing training and staying motivated over the long term.

The purpose of WorkoutTracker is to provide users with free and accessible tools to track and evaluate their workouts, helping them improve over time. While fitness can often feel complicated, this app simplifies progress tracking, emphasizing that real growth comes from commitment, consistency, and determination. The application aims to address the lack of free, concise feedback and analysis in the mobile fitness world.

WorkoutTracker is designed for fitness enthusiasts of all levels, from beginners who want to establish consistent workout habits to advanced athletes aiming to monitor performance trends and optimize training. Its intuitive interface and automated tracking features make it accessible to anyone, regardless of prior experience with fitness apps. Users can log individual exercises, track weight and repetitions, monitor heart rate, and view historical progress, enabling them to make data-driven decisions about their training routine. Whether the goal is building strength, improving endurance, or maintaining overall fitness, the app supports consistent tracking and evaluation over time.

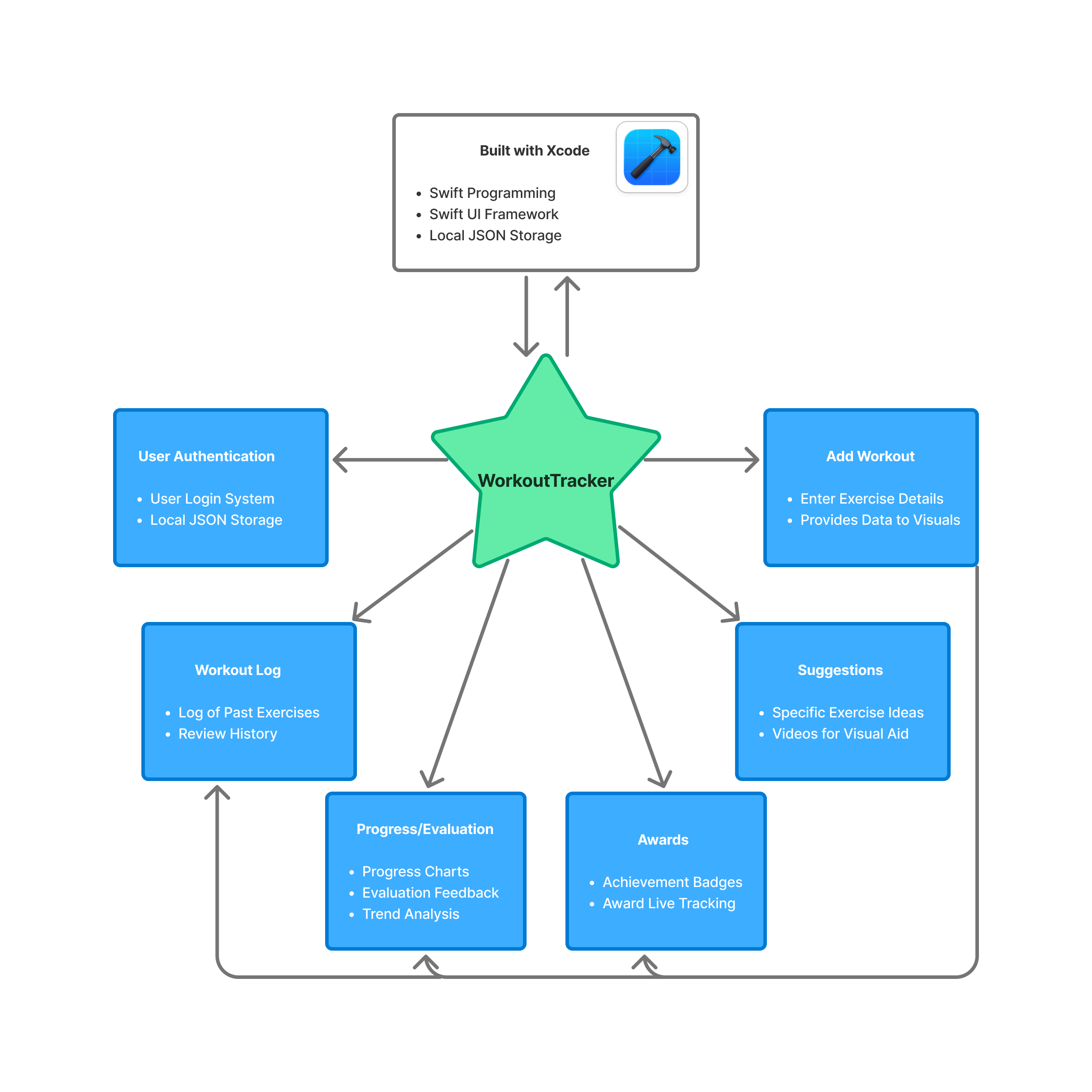

System Architecture

The overall architecture of WorkoutTracker is designed to provide a seamless, reactive experience for users while maintaining a simple design. As seen in the flowchart above, there are many moving pieces within this application that are important to note and explain. Many of these pages communicate and work together to produce one functioning application. The Xcode platform and the Swift language are explained first. After that, six different pages are explained: History, Progress, Awards, Suggestions, Add, and Profile.

Xcode

Xcode is Apple’s integrated development environment (IDE) for building applications across its platforms, including iOS, iPadOS, macOS, and watchOS. It provides developers with a complete toolkit, including a code editor, interface builder, simulator, debugging tools, and project management, all in one environment. For a mobile app like WorkoutTracker, Xcode allows for rapid development and testing, letting developers design interactive interfaces visually while simultaneously writing and compiling Swift code. It also lets developers act as their own prototype testers by offering two choices: test the app on a provided simulator for the chosen platform (iOS, watchOS, etc.), or test the app on their own device.

While Xcode provides simulators for iOS, watchOS, and other Apple platforms, testing on a live device offers a more accurate understanding of how the app performs in real-world conditions. Simulators are helpful for quickly checking layouts and basic functionality, but they cannot fully replicate device-specific behaviors such as actual touch responsiveness, performance under load, battery usage, or on-device behavior such as local user creation.

To test WorkoutTracker on a live device, the following steps were taken:

- Device Preparation: The iPhone was connected via USB to the development computer. The device was trusted and registered with Xcode.

- Provisioning and Signing: A free or paid Apple Developer account was used to set up a provisioning profile and code signing for the device.

- Selecting the Device in Xcode: In Xcode, the target device was selected from the run destination menu instead of the simulator.

- Building and Running: The app was built and deployed directly to the device using Xcode’s Run command.

- Live Testing: The app was interacted with on the device to verify UI responsiveness, chart updates, data entry, and performance in real usage scenarios.

Completing these steps gave my mobile phone a running version of my current computational artifact during development, which enabled testing of the aspects of the app mentioned previously. There are several additional key features of Xcode that are worth highlighting.

Xcode is tightly integrated with Swift, Apple’s primary programming language. It provides real-time syntax highlighting, error detection, and compile-time checks, allowing developers to identify and correct issues early in the development process. This tight integration improves code reliability and reduces debugging time.

Xcode includes advanced debugging tools such as breakpoints, variable inspection, and console logging. Additionally, Instruments allows developers to profile CPU usage, memory consumption, and energy impact. These tools are especially important for a fitness app like WorkoutTracker, where performance efficiency and battery usage are critical during prolonged use.

Xcode’s Interface Builder and SwiftUI Previews allow developers to visually design user interfaces and immediately see changes reflected in real time. This significantly speeds up UI iteration and ensures layouts adapt properly across different screen sizes and device types.

Xcode has built-in Git support, allowing developers to track changes, manage branches, and revert to previous versions directly within the IDE. This is essential for maintaining code stability as features are added or modified throughout development. Having built-in Git support was crucial for this project. It allowed me to save work continuously to a repository that my colleagues and professors could track and view.

Xcode manages the full application lifecycle—from development and testing to archiving and preparing the app for distribution via TestFlight or the App Store. This makes it a centralized tool not only for development but also for deployment and maintenance.

Xcode provides detailed build logs and warnings that help developers understand compilation errors and runtime issues. This feedback is crucial for debugging logic errors and ensuring the app meets Apple’s platform requirements. Overall, Xcode serves as a comprehensive development environment that supports the entire workflow of the WorkoutTracker app, from initial design and coding to real-device testing, performance optimization, and eventual deployment.

User Authentication

When the user opens the application, they are automatically brought to the login page. When the user downloads and opens the app for the first time, they will need to press a button to be brought to the user creation page. Within the user creation page, they are prompted to create a username and then a password, which must be typed twice for confirmation. To keep this application safe and secure, the username and password are both stored locally on the user’s device. Developing the app in this fashion keeps the account data on the device, so only the user is able to access the account.

Once the user creates this account, they will be brought back to the login page and will now log in with the same username and password that they just created. The information they type into the username and password fields is then checked against what is stored locally within the device. If and only if the information provided matches the stored information correctly, the user will then be allowed into the application. If the username or password is incorrect, an error message will be displayed, telling the user that the information does not match what the app has locally.
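The account creation and login checks described above can be sketched as follows. This is a minimal illustration, not the app's actual code: `StoredCredentials`, `LoginResult`, `createAccount`, and `logIn` are hypothetical names, and rejecting empty fields is an added assumption. How the credentials are persisted on the device is not shown in the text, so the sketch keeps them in a plain struct.

```swift
import Foundation

// Hypothetical container for the credentials saved at account creation.
struct StoredCredentials {
    let username: String
    let password: String
}

enum LoginResult: Equatable {
    case success
    case failure(message: String)
}

// The password must be typed twice; returns nil when the two copies differ.
// Rejecting empty fields is an assumption, not something stated in the text.
func createAccount(username: String, password: String, confirmation: String) -> StoredCredentials? {
    guard !username.isEmpty, !password.isEmpty, password == confirmation else {
        return nil
    }
    return StoredCredentials(username: username, password: password)
}

// The user is allowed in only if both fields match what is stored.
func logIn(username: String, password: String, stored: StoredCredentials) -> LoginResult {
    if username == stored.username && password == stored.password {
        return .success
    }
    return .failure(message: "The username or password does not match what is stored on this device.")
}
```

A login attempt with the wrong password returns `.failure` with the message to display, which mirrors the error path described above.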

This user-authentication system keeps the application safe and secure while also making sure that the person signing in is the right user.

Add Workout

The page where the user enters all of their workout data is the engine of this whole application. Without the user entering any data, nothing will happen within this application. Once the user enters data, such as repetitions, weight, exercise, and muscle group, this powers all the other parts of this application. As discussed in the upcoming section, the data input by the user then goes to multiple different pages to display different data or evaluations.

Within the add workout page, there are four fields that the user is required to enter. These fields are repetitions, weight, exercise, and muscle group. Once the user has entered all of this information, they are able to press the save button, which kickstarts the data flow within the system. Each of these fields has a unique purpose within the application. Repetitions and weight serve the same purpose of tracking workouts over time. If the user consistently enters data for the same workout over time, they can easily receive feedback, visualize progression or regression, and track personal records. The exercise name tells the application where the data should go. If the user enters a bench press session, the backend of the application recognizes the name of the exercise and places the data with all of the other bench press entries. The muscle group allows the application to calculate the fatigue of a muscle group more accurately and to group together entries for a specific muscle group.
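The grouping role of the exercise and muscle group fields can be illustrated with Swift's `Dictionary(grouping:by:)`. This is a sketch only: `LoggedSet` is a hypothetical, simplified entry type, and the app may group its data differently.

```swift
// Hypothetical, simplified entry with the four required fields.
struct LoggedSet {
    let exercise: String
    let muscleGroup: String
    let weight: Double
    let reps: Int
}

let loggedSets = [
    LoggedSet(exercise: "Bench Press", muscleGroup: "Chest", weight: 135, reps: 8),
    LoggedSet(exercise: "Squat", muscleGroup: "Legs", weight: 185, reps: 5),
    LoggedSet(exercise: "Bench Press", muscleGroup: "Chest", weight: 140, reps: 6),
    LoggedSet(exercise: "Leg Press", muscleGroup: "Legs", weight: 270, reps: 10)
]

// Place each entry with the other entries for the same exercise.
let byExercise = Dictionary(grouping: loggedSets, by: { $0.exercise })

// Group entries by muscle group, for example for a fatigue calculation.
let byMuscleGroup = Dictionary(grouping: loggedSets, by: { $0.muscleGroup })

print(byExercise["Bench Press"]?.count ?? 0)
print(byMuscleGroup["Legs"]?.map { $0.exercise } ?? [])
```

Within each group the entries keep the order in which they were logged, which is convenient when the grouped data later feeds a chart.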

Workout Log

The Workout Log is the centralized history of all workouts the user has recorded. Its primary purpose is to give users a clear, organized view of their past training sessions, allowing them to track consistency, review performance, and monitor progress over time. Each entry represents data the user manually input such as exercise name, weight, reps, and date. This is displayed in a structured list format for easy scanning.

Beyond simply viewing entries, the log also allows users to manage their data. Users can delete individual entries, giving them control to remove mistakes, outdated records, or duplicate workouts. This keeps the dataset accurate and relevant, which is important because other parts of the app — such as progress tracking, award calculations, and performance evaluations — rely on this stored workout data. In this way, the Workout Log acts as both a historical record and the core data source that powers the app’s analytics and achievement systems.

Progress / Evaluation

The progress and evaluation page analyzes the user’s recorded workouts and transforms raw data into meaningful performance insights. Rather than simply listing exercises, this section identifies trends such as personal records, strength improvements, workout frequency, and consistency over time. Its purpose is to help users clearly see whether they are progressing toward their fitness goals and to provide measurable feedback that reinforces motivation. By evaluating stored workout entries, it turns past activity into actionable performance information.

The evaluation portion is the center of attention within this application because it provides a level of personalized performance feedback that is not commonly found in basic workout tracking apps. While many fitness apps allow users to log exercises and view past entries, they often stop at simple data storage. This application goes further by actively interpreting that data to generate meaningful insights, such as tracking personal records, identifying strength trends, and highlighting consistency patterns. Instead of forcing users to manually analyze their own progress, the evaluation system transforms raw workout entries into clear, actionable feedback.

What makes this feature especially valuable is its ability to connect effort with measurable results. By continuously analyzing logged workouts, the evaluation system gives users a deeper understanding of how their training habits impact their performance over time. This creates a more engaging and motivating experience, as users are not just recording workouts—they are receiving structured feedback that reinforces improvement and goal progression. In this way, the evaluation component becomes the defining feature of the application, elevating it beyond a simple workout log and into a performance-driven fitness tool.

Profile

The profile page serves as the user’s personal account center within the app. It displays information tied to the authenticated user, such as their username, and may include a summary of their activity, including total workouts or number of different exercises. Its purpose is to provide a centralized location for identity, personalization, and account-related controls, reinforcing a sense of ownership over the user’s data and progress.

The purpose of this page is to keep certain pieces of data in one place for the user to see. Personal record tracking is important in fitness applications, so displaying these records to the user, along with the total weight, is something specifically catered to the fitness community.

Awards

The Awards page is designed to motivate users by recognizing milestones and accomplishments achieved through consistent training. Awards may be earned for completing a certain number of workouts, maintaining streaks, or reaching performance benchmarks such as personal records. Its purpose is to gamify the fitness experience, making progress feel rewarding and encouraging users to remain consistent with their routines.

This page relies on workout data stored in the Workout Log and analyzed in the Progress section to determine eligibility for achievements. When users add or remove workout entries, award qualifications may update accordingly. By connecting effort to recognition, the Awards page reinforces positive habits and enhances long-term engagement with the app.
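One way to make award eligibility update as entries are added or removed is to recompute it from the current data each time. The sketch below assumes workout-count milestones of 10, 50, and 100; these thresholds and the name `earnedAwards` are hypothetical, since the app's actual award rules are not listed here.

```swift
// Hypothetical milestone thresholds; the app's real award rules are not listed in the text.
let workoutMilestones = [10, 50, 100]

// Recompute earned awards from the current entry count, so adding or
// deleting entries in the Workout Log changes the result automatically.
func earnedAwards(totalWorkouts: Int) -> [String] {
    workoutMilestones
        .filter { totalWorkouts >= $0 }
        .map { "\($0) workouts logged" }
}

print(earnedAwards(totalWorkouts: 55))
print(earnedAwards(totalWorkouts: 9))
```

Because nothing is cached, deleting an entry that drops the total below a milestone removes that award the next time the function runs.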

Gamification is an important piece of fitness applications. Awards and accomplishments give the user a sense of motivation as well as engagement. Keeping a user in the app for a long period of time will, first, help them progress in the gym and, second, make the app’s data more accurate because it covers a longer period. The awards section was created to engage the user base in this way.

Suggestions

The suggestions page functions as an educational and guidance tool within the app. It provides users with recommended exercises organized by muscle group, helping them structure workouts and explore new movements. Each suggestion may include helpful tips or instructional resources to ensure proper form and safe execution. Its primary purpose is to support users in building effective and well-rounded training routines.

While this page does not store or analyze user data directly, it complements the workout log by influencing what users choose to record. By offering structured guidance and variety, the suggestions page enhances the overall training experience and helps users make informed decisions about their workouts. This allows beginners in the gym to choose from a suggested list of exercises to get started in their gym journey.

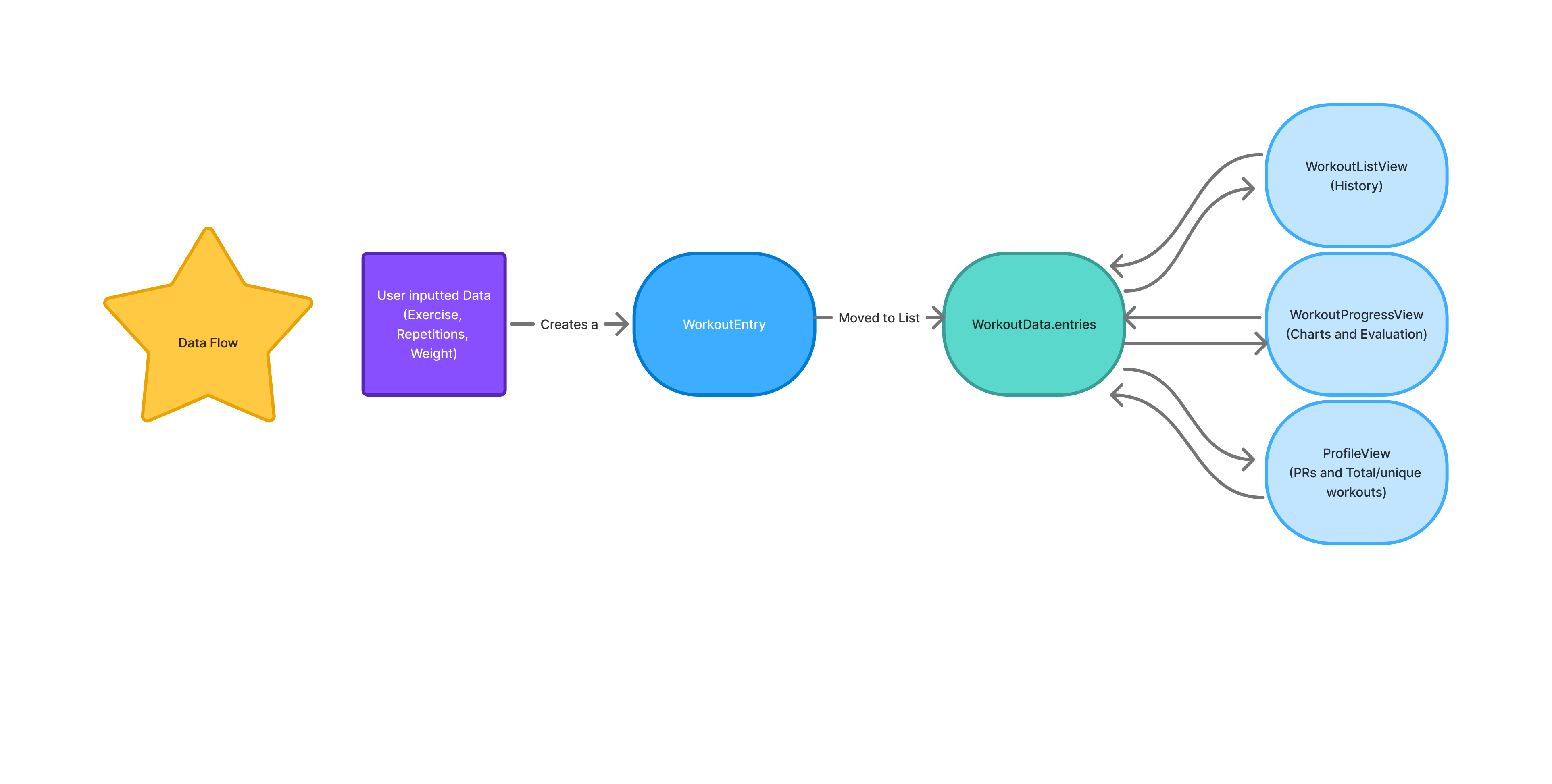

Data Flow and Processing Pipeline

This section introduces the data flow and processing pipeline used in the WorkoutTracker application. It explains how user-entered workout data moves through the system, from initial input to final output, and how each stage contributes to the app’s overall functionality. Rather than focusing solely on individual code segments, this section emphasizes the logical flow of data and the design decisions behind it, supported by a flowchart and selected code examples. This approach provides a clear understanding of how raw workout data is transformed into meaningful metrics, summaries, and visualizations presented to the user.

Let’s start with how the data is input by the user. As seen in the flowchart, the user-entered data starts all of the following processes. In WorkoutTracker, data querying does not run continuously. Instead, everything runs when the user presses the save button, which finalizes the data they are entering. This follows what is called an event-driven model, where queries are triggered only when specific events occur, in this case when the user logs a workout. Another such event is when the user navigates to the progress page to view visualizations. When no new data is being entered or requested, the querying logic is idle and consumes no processing resources. This design was chosen to ensure efficient resource usage, avoid unnecessary computation, and improve battery performance, which is critical in app development. It also aligns with modern mobile app development standards, where data processing is reactive rather than continuously running.
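The event-driven idea can be sketched without any UI framework. In this illustration, `EntryStore` and `RefreshCounter` are hypothetical names and entries are plain strings; the point is only that listeners run when `save` fires and never in between.

```swift
// Hypothetical store: work happens only when save(_:) fires, never in a background loop.
final class EntryStore {
    private(set) var entries: [String] = []
    private var listeners: [([String]) -> Void] = []

    // Pages register a closure that recomputes their view of the data.
    func onChange(_ listener: @escaping ([String]) -> Void) {
        listeners.append(listener)
    }

    // Called when the user presses Save: store the entry, then notify each listener once.
    func save(_ entry: String) {
        entries.append(entry)
        listeners.forEach { $0(entries) }
    }
}

// Hypothetical helper that counts how many times a page was asked to refresh.
final class RefreshCounter {
    var count = 0
}

let store = EntryStore()
let counter = RefreshCounter()
store.onChange { _ in counter.count += 1 }

store.save("Bench Press 135 x 8")
store.save("Squat 185 x 5")
print(counter.count)
```

Between the two `save` calls no listener runs, which is the idle behavior described above.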

```swift
// Build one structured record from the text fields on the Add Workout page.
let entry = WorkoutEntry(
    date: Date(),                  // timestamp of the save
    muscleGroup: cleanMuscleGroup,
    exercise: cleanExercise,
    weight: Double(weight) ?? 0,   // falls back to 0 if the text is not a number
    reps: Int(reps) ?? 0,
    heartRate: Double(heartRate)   // optional: nil if the text is not a number
)
```

This code structures every input that the app receives from the user. As shown in the flowchart, once the user adds a workout, the app creates a “WorkoutEntry”. This initializer structures every input the same way each time, which simplifies looping through the data to find specific inputs. Each entry is made up of six parts: date, muscle group, exercise, weight, reps, and an optional heart rate. Every time an input is received, it is transformed into this structure and then appended to the list WorkoutData.entries.

In the flowchart, this list is shown in teal. It contains all of the data inputs that the user has ever provided to the app, so as the user adds hundreds of inputs, the list continues to grow. WorkoutData.entries is an in-memory data structure rather than a JSON object. It is implemented as a Swift array containing strongly typed workout entry models, such as structs that represent individual workout records. This array exists only while the application is running and is used by the app’s logic and user interface to query, process, and display workout information efficiently. JSON is involved only when this data needs to be persisted or loaded; in that case, the Swift objects stored in WorkoutData.entries are serialized into a JSON file for local storage and later deserialized back into Swift objects when the app is relaunched. This separation between runtime data structures and storage format ensures type safety, efficient processing, and clean state management within the application.
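The split between the in-memory array and its JSON storage form can be sketched with Swift's `Codable`. The field names follow the entry structure described above, but the `save` and `load` helpers and the file name are assumptions made for illustration, not the app's actual persistence code.

```swift
import Foundation

// Assumed shape of an entry, following the six parts described in the text.
struct WorkoutEntry: Codable, Equatable {
    var date: Date
    var muscleGroup: String
    var exercise: String
    var weight: Double
    var reps: Int
    var heartRate: Double?   // optional field
}

// Serialize the in-memory array to a JSON file (hypothetical helper).
func save(_ entries: [WorkoutEntry], to url: URL) throws {
    let data = try JSONEncoder().encode(entries)
    try data.write(to: url, options: .atomic)
}

// Read the JSON file back into strongly typed Swift values (hypothetical helper).
func load(from url: URL) throws -> [WorkoutEntry] {
    let data = try Data(contentsOf: url)
    return try JSONDecoder().decode([WorkoutEntry].self, from: data)
}

// Hypothetical file location used only for this example.
let fileURL = FileManager.default.temporaryDirectory
    .appendingPathComponent("workout-entries-example.json")

let sampleEntries = [
    WorkoutEntry(date: Date(timeIntervalSinceReferenceDate: 700_000_000),
                 muscleGroup: "Chest", exercise: "Bench Press",
                 weight: 135, reps: 8, heartRate: nil)
]

try! save(sampleEntries, to: fileURL)
let reloadedEntries = try! load(from: fileURL)
print(reloadedEntries.count)
```

The rest of the app only ever sees `[WorkoutEntry]`; JSON appears solely inside the two helper functions, which is the separation the paragraph above describes.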

Appending each incoming input to the same list in the same structure makes tasks such as PR querying and data visualization much easier to code. A single forEach loop can parse through the list and check for any specific detail the developer wants. For example, collecting all of the entries that work a specific muscle group would look like this.

```swift
let targetMuscle = "legs"
var matchingExercises: [WorkoutEntry] = []
// Visit every logged entry and keep the ones for the target muscle group.
workoutData.entries.forEach { entry in
    if entry.muscleGroup == targetMuscle {
        matchingExercises.append(entry)
    }
}
```

Within this code snippet, the loop parses every entry in the list and looks for targetMuscle, which is initialized before the loop. If targetMuscle is declared as “legs,” then the matchingExercises list contains only the entries whose muscleGroup is legs. Swift keeps a forEach loop simple by requiring only three things in this example. First, the collection to parse, in this case the name of the list. Second, the condition to check for each entry, in this case a specific muscle group. Third, the action to take, in this case appending the entry only if it matches the desired muscle group.

Moving forward in the flowchart, we can see that this list, WorkoutData.entries, fuels the rest of the processes. Three different pages take data from this list: History, Progress (charts and evaluation), and Profile. Each of these sections of WorkoutTracker uses different queries to keep its data up to date.

WorkoutListView (history) is the page where the user is able to view a log of all the workouts they have entered into the app. Within this page users have the ability to delete specific entries if they mis-entered information or wish to remove an entry. All that this page is doing is displaying the list of entries in a neat and easily readable output. There are two important code parts that go into this page.

```swift
var body: some View {
    NavigationView {
        ZStack {
            // Full screen gradient
            AppColors.gradient
                .ignoresSafeArea()
            List {
                // One card per stored workout entry
                ForEach(workoutData.entries) { entry in
                    WorkoutCard(entry: entry)
                        .listRowBackground(Color.clear)
                        .listRowSeparator(.hidden)
                }
                .onDelete(perform: workoutData.delete)
            }
            .listStyle(PlainListStyle())
        }
        .navigationTitle("Workout History")
        .navigationBarTitleDisplayMode(.inline)
    }
}
```

This SwiftUI code defines the user interface for a “Workout History” screen. The main view is a NavigationView containing a ZStack, which allows layering of elements. At the back of the stack, a full-screen gradient defined by AppColors.gradient is displayed and extended to cover the safe area using .ignoresSafeArea(). On top of this gradient, a List presents all workout entries stored in workoutData.entries using ForEach, where each entry is represented by a custom WorkoutCard view. Each list row is customized to have a transparent background (.listRowBackground(Color.clear)) and no default separator (.listRowSeparator(.hidden)), giving it a clean, card-like appearance. Users can also delete entries with swipe actions via .onDelete(perform:). The list uses PlainListStyle() to remove extra padding and the default list styling, and the navigation bar is set with the title “Workout History,” displayed inline for a compact appearance.

This view shows a user-friendly “Workout History” screen where each workout is displayed as a card. The colorful gradient in the background makes the screen visually appealing, while the list of workouts scrolls on top of it. Each card is easy to read because the rows are transparent and have no separators, giving a clean, modern look. Users can also swipe to delete workouts, and the navigation bar at the top clearly shows the title of the screen. Overall, this layout combines style and functionality in a simple, organized way.

```swift
VStack(alignment: .leading, spacing: 6) {
    // Top row: exercise name on the left, weight x reps on the right
    HStack {
        Text(entry.exercise)
            .font(.headline)
            .foregroundColor(.white)
        Spacer()
        Text("\(Int(entry.weight)) lbs x \(entry.reps)")
            .font(.subheadline)
            .foregroundColor(.white.opacity(0.9))
    }

    // Bottom row: optional heart rate, then the workout date
    HStack(spacing: 12) {
        if let hr = entry.heartRate {
            Label("\(Int(hr)) bpm", systemImage: "heart.fill")
                .font(.caption)
                .foregroundColor(hr > 140 ? .red : .green)
        }

        Label(entry.date.formatted(.dateTime.month().day().year()),
              systemImage: "calendar")
            .font(.caption)
            .foregroundColor(.white.opacity(0.8))
    }
}
```

This SwiftUI code defines the layout of a single workout entry in a vertical stack (VStack) with a small spacing of 6 points between its elements, aligned to the leading edge. The first horizontal stack (HStack) displays the exercise name on the left in a bold headline font with white text, and on the right, it shows the weight lifted and the number of repetitions in a slightly smaller, semi-transparent white font. A Spacer() between them pushes the two pieces of text to opposite sides. Below that, a second HStack with a spacing of 12 points shows additional details: if the workout entry includes a heart rate, it displays it with a heart icon in a caption font, coloring it red if the rate is above 140 bpm and green otherwise. Next to that, a calendar icon with the formatted workout date is displayed, also in a caption font and semi-transparent white. Overall, this layout creates a clean, organized card showing both the main workout information and supplemental details like heart rate and date.

The first code snippet defines the overall screen layout, using a NavigationView with a scrollable List that displays all workout entries, while the second snippet defines what each individual entry looks like inside that list as a WorkoutCard. Essentially, the List in the first snippet loops over all workoutData.entries and places a WorkoutCard for each one, creating a full workout history view. The WorkoutCard layout, shown in the second snippet, organizes the exercise name, weight, reps, heart rate, and date in a clean, readable format using vertical and horizontal stacks. Together, the two snippets work in tandem: the first handles the overall structure, scrolling, and navigation, and the second handles the content and styling of each row, resulting in a cohesive, visually appealing workout history screen.

Moving forward from the history page, the ProgressView file is what this mobile application is specifically focused on. This page produces the evaluation and visuals for the user to read. All of the algorithms within this file start when a user enters data. It is important to note once again that entering data is like starting a car’s engine. It provides power to the screen display, activates the underlying logic of the program, and triggers the sequence of operations that follow. Without that initial input, the system remains idle, waiting for instruction. Once the data is entered, the algorithms process it step by step, transforming raw input into meaningful output that is then presented back to the user. In this way, user input serves as the driving force that moves the entire program forward.

```swift
// Return every entry for one exercise, ordered from oldest to newest.
func entries(for exercise: String) -> [WorkoutEntry] {
    workoutData.entries
        .filter { $0.exercise == exercise }
        .sorted { $0.date < $1.date }
}
```

Regarding the visual portion, the most important goal was to create a visual for each separate exercise that the user enters. When the user navigates to the progress tab, they are first prompted to select the exercise whose visual and evaluation they would like to view. Once the user selects an exercise, for example, bench press, the app selects from workoutData.entries all of the entries recorded for that exercise. As the code shows, the function uses Swift’s filter method rather than an explicit for loop. It passes over the list of entries, keeps only those for the specified exercise, and sorts them by date from oldest to newest. This allows the program to display all of the data for the specified exercise in chronological order.

In other functions, the progress tab also retrieves information such as weight, repetitions, and date for each entry in order to add it to the graph. The graph gives the user the ability to visualize trends within their exercise history and receive feedback on why those trends might be happening. The data is then also used by the evaluation portion of this file. Several algorithms calculate trends within the user’s data and provide feedback on whether their muscles may be fatigued, among other insights. The program does this by initializing multiple variables up front, such as momentum and latestEntry, which are then used to calculate different feedback, such as fatigue and volume. These variables all draw their data from the list of workout entries, so once new data is entered, they change to stay up to date.

The last page that gets powered by the user-inputted data is the profile page. The profile page is something important to include when creating a mobile fitness application. It allows the user to easily discover, in this fitness app specifically, personal records, the number of workouts, and cumulative personal record data. This page uses the data in a very simple way, designed specifically to be important but simple.

```swift
// Heaviest weight among all entries whose exercise name contains "squat".
let squatPR = workoutData.entries
    .filter { $0.exercise.lowercased().contains("squat") }
    .map { $0.weight }
    .max() ?? 0.0
```

This code calculates a user’s squat personal record (PR) by analyzing their stored workout data. It begins by accessing the entries array inside workoutData, which contains all logged workouts. It then filters that list to include only exercises whose names contain the word “squat,” converting each exercise name to lowercase to ensure the search is case-insensitive. After narrowing the list to only squat-related movements, the code maps those entries to extract just the weight values. From that list of weights, it uses .max() to determine the highest value, which represents the user’s heaviest recorded squat. If no squat entries are found and .max() returns nil, the nil-coalescing operator (??) ensures the result defaults to 0.0. Overall, this expression efficiently finds the maximum squat weight the user has logged, providing their squat PR.

A significant portion of this project and application focuses on data and data analysis. By breaking this application down piece by piece we are able to see how the user-inputted data is the center of attention in this application.

Algorithms and Analytical Methods

The trend analysis and evaluation system is the most analytically sophisticated component of WorkoutTracker, and represents the feature that most clearly differentiates this application from a basic workout logging tool. Implemented within WorkoutProgressView.swift, this system transforms a user’s raw workout history into a structured, session-by-session performance assessment. The following subsections explain the logic, design decisions, and justifications behind each analytical layer.

Exercise Filtering and Chronological Sorting

Before any trend analysis can occur, the application must isolate the relevant subset of data for the exercise the user has selected. This is handled by two complementary functions. The entries function loops over WorkoutData.entries, keeps only the records matching the selected exercise name, and sorts them in ascending chronological order. This sorted sequence becomes the data source for the progress chart, ensuring that the visualization accurately reflects the progression of the user’s training. A companion function, history, loops over the same data and performs the same filtering but sorts in descending order, returning the most recent session first. This ordering is intentional: the evaluation logic always begins from the latest entry and works backwards, making the most recent session the reference point against which all prior sessions are compared.

The choice to maintain two separately ordered views of the same data, rather than sorting on the fly within each function, reflects a deliberate design decision to keep the chart logic and the evaluation logic independent of one another. The chart needs ascending order to plot points left to right over time, while the evaluator needs descending order to prioritize recency. Separating these concerns makes both functions simpler and less error-prone.
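The two views described above can be sketched as follows. This is a minimal illustration, not the app’s actual code: the WorkoutEntry fields shown here are a reduced, assumed version of the real model, the function names chartEntries and history(for:in:) are hypothetical, and the sketch uses filter and sorted where the application itself is described as using loops.

```swift
import Foundation

// Hypothetical minimal model; the app's real WorkoutEntry has more fields.
struct WorkoutEntry {
    let date: Date
    let exercise: String
    let weight: Double
    let reps: Int
}

// Ascending order (oldest first): the order a chart needs to plot left to right.
func chartEntries(for exercise: String, in all: [WorkoutEntry]) -> [WorkoutEntry] {
    all.filter { $0.exercise == exercise }
        .sorted { $0.date < $1.date }
}

// Descending order (newest first): the order the evaluator reads from.
func history(for exercise: String, in all: [WorkoutEntry]) -> [WorkoutEntry] {
    all.filter { $0.exercise == exercise }
        .sorted { $0.date > $1.date }
}

// Small sample: two squat sessions and one unrelated bench press session.
let day: TimeInterval = 86_400
let start = Date(timeIntervalSince1970: 0)
let sample = [
    WorkoutEntry(date: start.addingTimeInterval(2 * day), exercise: "Squat", weight: 235, reps: 5),
    WorkoutEntry(date: start, exercise: "Squat", weight: 225, reps: 5),
    WorkoutEntry(date: start.addingTimeInterval(day), exercise: "Bench Press", weight: 155, reps: 8)
]

let ascending = chartEntries(for: "Squat", in: sample)
let descending = history(for: "Squat", in: sample)
```

Here ascending starts with the oldest squat session and descending starts with the newest, so the evaluator can read its reference session from descending.first.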

Session-to-Session Comparison

The core of the evaluation logic begins with a direct comparison between the latest session and the one immediately preceding it. The function retrieves the two most recent entries from the descending history and compares weight and repetitions side by side. This produces the opening line of the evaluation feedback, which is dynamically selected based on the outcome of that comparison. If both weight and reps increased, the feedback reflects progressive overload, which is the gold standard of strength training. If only weight increased, the feedback identifies strength development. If only reps increased, endurance improvement is noted. If neither increased, the system identifies the session as a maintenance effort and recommends a focus on technique.

This comparison-first approach is justified because session-to-session comparison is the most immediately meaningful signal to a fitness user. It directly answers the question every athlete asks after a workout: did I do better than last time? By leading with this signal and framing it in motivational language, the evaluation delivers actionable context before introducing any more complex metrics.
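The four outcomes of this comparison can be sketched as a small classification function. The enum and function names below are hypothetical and only illustrate the branching; the wording of the app’s actual feedback text is not reproduced here.

```swift
// Hypothetical names; each case mirrors one of the four outcomes described above.
enum SessionOutcome {
    case progressiveOverload  // weight and reps both increased
    case strength             // only weight increased
    case endurance            // only reps increased
    case maintenance          // neither increased
}

// Compares the latest session against the one immediately before it.
func compare(latestWeight: Double, latestReps: Int,
             previousWeight: Double, previousReps: Int) -> SessionOutcome {
    let weightUp = latestWeight > previousWeight
    let repsUp = latestReps > previousReps
    switch (weightUp, repsUp) {
    case (true, true):   return .progressiveOverload
    case (true, false):  return .strength
    case (false, true):  return .endurance
    case (false, false): return .maintenance
    }
}

// Example: the weight went up while the reps stayed the same.
let outcome = compare(latestWeight: 230, latestReps: 5,
                      previousWeight: 225, previousReps: 5)
```

Switching over the pair of Boolean flags makes it easy to confirm that every combination is handled exactly once.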

Volume Calculation

Beyond raw weight and reps, the system calculates training volume for each session as weight multiplied by repetitions. Volume is a well-established metric in exercise science for quantifying the total mechanical work performed in a session. Comparing the current session’s volume against the previous session’s volume gives a more complete view of performance change than weight or reps alone. For example, a user who lifts slightly less weight but performs significantly more repetitions may actually have produced a greater training stimulus, and volume captures this.

The volume comparison result feeds directly into the evaluation text, informing the user whether their overall training load increased or decreased relative to their last session, and by how much. Volume was included because it is a core measure of any weightlifting session.
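As a worked illustration of the volume comparison, the sketch below uses made-up numbers and hypothetical helper names. It shows the case mentioned above, where the weight drops but the volume still rises.

```swift
// Volume for one logged entry: weight multiplied by repetitions.
func volume(weight: Double, reps: Int) -> Double {
    weight * Double(reps)
}

// Fractional change from the previous session (0.10 means +10%).
// Returns nil when the previous volume is zero, to avoid dividing by zero.
func volumeChange(current: Double, previous: Double) -> Double? {
    guard previous > 0 else { return nil }
    return (current - previous) / previous
}

let previousVolume = volume(weight: 200, reps: 5)  // 200 x 5 = 1000
let currentVolume = volume(weight: 185, reps: 8)   // 185 x 8 = 1480
let change = volumeChange(current: currentVolume, previous: previousVolume)
```

In this example the user lifted 15 pounds less but produced 48% more volume than in the previous session.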

Momentum Calculation

One of the more analytically complex components of the evaluation system is the momentum metric. Rather than comparing only the two most recent sessions, momentum is calculated by comparing the average volume across the five most recent sessions against the average volume of the five sessions before that. This produces a measure of performance trajectory that is less sensitive to a single unusually good or bad workout than a comparison between the two most recent sessions.

The momentum value is expressed as a fractional change between the two rolling windows, so 0.15 corresponds to a 15% increase. A value above 0.15 indicates rapidly accelerating performance, a value between 0.05 and 0.15 indicates steady positive progress, and a value below -0.10 signals a meaningful decline that may indicate fatigue or insufficient recovery. This tiered interpretation ensures that the feedback adapts to the magnitude of the trend rather than applying binary labels to continuous data.

Using a rolling window rather than a cumulative average is justified by the recency principle in sports science: recent training sessions are more predictive of current fitness state than the entirety of training history, which may include periods of deconditioning, injury, or significant load changes.
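A minimal sketch of the momentum calculation follows. The function names are hypothetical, the input is assumed to be a list of session volumes ordered newest first, and the label for values between -0.10 and 0.05 is an assumption, since the tiers described above do not name that range.

```swift
// Fractional change between the average volume of the most recent `window`
// sessions and the `window` sessions before them.
// `volumes` is assumed to be ordered newest first.
// Returns nil when there are fewer than two full windows of data.
func momentum(volumes: [Double], window: Int = 5) -> Double? {
    guard window > 0, volumes.count >= 2 * window else { return nil }
    let recent = volumes[0..<window].reduce(0, +) / Double(window)
    let prior = volumes[window..<(2 * window)].reduce(0, +) / Double(window)
    guard prior > 0 else { return nil }
    return (recent - prior) / prior
}

// Maps a momentum value onto the tiers described in the text.
func describe(momentum m: Double) -> String {
    if m > 0.15 { return "rapidly accelerating" }
    if m >= 0.05 { return "steady progress" }
    if m < -0.10 { return "meaningful decline" }
    return "roughly flat"  // assumption: the text does not label this range
}

// Five recent sessions averaging 1200 against five earlier ones averaging 1000.
let sampleVolumes: [Double] = [1200, 1200, 1200, 1200, 1200,
                               1000, 1000, 1000, 1000, 1000]
let sampleMomentum = momentum(volumes: sampleVolumes)
```

Returning nil for short histories lets the caller skip the momentum line entirely instead of reporting a trend based on too little data.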

Predictive Next Session Estimation

The evaluation system also generates a prediction for the user’s next session using a simple linear regression over the five most recent entries. The slope of the weight trend and the slope of the reps trend are each calculated independently and applied to the most recent session’s values to project what the following session might look like.

The implementation computes the slope and applies it over an indexed sequence of sessions rather than raw dates, which avoids potential problems caused by irregular training intervals. The predicted weight is then rounded to the nearest five-pound increment, a practical decision reflecting the standard plate increments available in most gym settings. Predicted reps are rounded to the nearest whole number. Minimum floors of five pounds and one repetition are enforced to prevent the model from generating nonsensical outputs during periods of decline.

This prediction is intentionally modest in scope. It does not attempt to model long-term periodization or account for biological variability. Instead, it provides a single concrete data point: a suggested target for the next session that is built on recent trend data and presented as a guide. For a user-facing fitness application, a simple, interpretable prediction is more valuable than a complex model whose outputs cannot be easily explained or verified by the user.
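The prediction step can be sketched as follows. This is an illustration under stated assumptions rather than the app’s implementation: the function names are hypothetical, the inputs are assumed to hold the five most recent sessions ordered oldest first, and the slope is an ordinary least-squares fit against the session index.

```swift
// Least-squares slope of `values` against their indices 0, 1, 2, ...
// `values` is assumed to be ordered oldest first.
func slope(of values: [Double]) -> Double {
    guard values.count > 1 else { return 0 }
    let n = Double(values.count)
    let meanX = (n - 1) / 2
    let meanY = values.reduce(0, +) / n
    var numerator = 0.0
    var denominator = 0.0
    for (i, y) in values.enumerated() {
        let dx = Double(i) - meanX
        numerator += dx * (y - meanY)
        denominator += dx * dx
    }
    return numerator / denominator
}

// Adds one step of each slope to the latest values, then rounds and applies floors.
func predictNext(weights: [Double], reps: [Int]) -> (weight: Double, reps: Int)? {
    guard let lastWeight = weights.last, let lastReps = reps.last else { return nil }
    let rawWeight = lastWeight + slope(of: weights)
    let rawReps = Double(lastReps) + slope(of: reps.map { Double($0) })
    let weight = max(5, (rawWeight / 5).rounded() * 5)  // nearest 5 lb, floor of 5 lb
    let nextReps = max(1, Int(rawReps.rounded()))       // nearest whole rep, floor of 1
    return (weight: weight, reps: nextReps)
}

// Weight slope is 3.5 lb per session; reps slope is 0.6 per session.
let prediction = predictNext(weights: [185, 190, 190, 195, 200],
                             reps: [6, 6, 7, 8, 8])
```

For the sample data, the raw projection of 203.5 pounds and 8.6 reps becomes a suggested target of 205 pounds for 9 reps after rounding.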

Fatigue and Recovery Index

The final analytical layer in the evaluation system is a fatigue index, calculated by dividing the user’s total training volume over the past seven days by their recent average session volume, then scaling the result to a 0-to-100 range. A score below 40 indicates a manageable training load with adequate recovery capacity; scores between 40 and 70 suggest elevated load that warrants attention; scores above 70 signal potential overtraining and recommend rest.

The fatigue index is important because volume and momentum metrics alone cannot distinguish between productive high-frequency training and accumulated fatigue. Two users might show identical momentum scores, but one may have achieved that momentum with three sessions per week while the other trained seven days consecutively. The fatigue index surfaces this distinction, providing context that the other metrics do not capture.
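The fatigue index can be sketched as shown below. The text above does not state how the ratio is scaled to the 0-to-100 range, so the fullScaleRatio parameter is a hypothetical constant introduced only for this illustration (here, a week containing seven average-volume sessions maps to 100). The Session type and function names are likewise assumptions.

```swift
import Foundation

// Hypothetical minimal record: when a session happened and its total volume.
struct Session {
    let date: Date
    let volume: Double
}

// Seven-day volume divided by the recent average session volume, scaled to 0...100.
// `fullScaleRatio` is an assumed constant: the ratio that maps to a score of 100.
func fatigueIndex(sessions: [Session], now: Date, averageSessionVolume: Double,
                  fullScaleRatio: Double = 7) -> Double {
    guard averageSessionVolume > 0, fullScaleRatio > 0 else { return 0 }
    let weekAgo = now.addingTimeInterval(-7 * 86_400)
    let weeklyVolume = sessions
        .filter { $0.date > weekAgo && $0.date <= now }
        .reduce(0.0) { $0 + $1.volume }
    let ratio = weeklyVolume / averageSessionVolume
    return min(100, max(0, ratio / fullScaleRatio * 100))
}

// Maps a score onto the three bands described in the text.
func fatigueLabel(_ score: Double) -> String {
    if score < 40 { return "manageable" }
    if score <= 70 { return "elevated" }
    return "potential overtraining"
}

// Two sessions inside the last seven days and one outside that window.
let referenceDate = Date(timeIntervalSince1970: 1_000_000)
let sampleSessions = [
    Session(date: referenceDate.addingTimeInterval(-1 * 86_400), volume: 1000),
    Session(date: referenceDate.addingTimeInterval(-3 * 86_400), volume: 1000),
    Session(date: referenceDate.addingTimeInterval(-10 * 86_400), volume: 1000)
]
let score = fatigueIndex(sessions: sampleSessions, now: referenceDate,
                         averageSessionVolume: 1000)
```

Because the score depends on how many sessions fall inside the seven-day window, two users with the same momentum but different training frequency receive different scores, which is the distinction the fatigue index is meant to surface.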

By evaluating these metrics and presenting the results to the user, WorkoutTracker completes a task that no other mobile fitness application has been able to complete. The justification provided in this section is important for understanding why and how each of these metrics is computed, as well as for demonstrating the uniqueness of this project.

The code for this experiment was developed and is published in a public repository at the following link: https://github.com/EvanNelson04/WorkoutTrackerExperiment.

Experiments

Experimental Setup

This experiment is tailored specifically to the technical components and development environment used in the WorkoutTracker application. Since the project was designed, implemented, and tested entirely within Xcode using the simulator, the experimental setup focuses on the conditions required to reproduce the app’s behavior and performance measurements. To make the results clear and repeatable, this section describes the hardware and software environment, the method used to generate the datasets for testing, and the benchmark methodology used to evaluate system performance. Together, these elements define the foundation of the experimental process and ensure that the reported results can be understood, replicated, and fairly interpreted. The benchmarking code used to generate the performance results discussed in this chapter is available in a separate public repository to support transparency and reproducibility: https://github.com/EvanNelson04/WorkoutTrackerExperiment.

Hardware and Software Environment

The experiments for this project were conducted on Apple hardware using Xcode and the iPhone Simulator to ensure a consistent and reproducible testing environment. The hardware platform used was an Apple MacBook Air equipped with an Apple M4 chip and 16 GB of RAM. This machine provided sufficient processing power and memory to build, run, and evaluate the application without hardware-related limitations affecting the benchmark results. Rather than deploying to a physical iPhone for the reported experiments, the application was executed through the iPhone Simulator included with Xcode, allowing controlled and repeatable testing conditions across multiple runs.

On the software side, the development environment consisted of macOS as the host operating system, Xcode as the integrated development environment, Swift as the programming language, and the iOS SDK version bundled with the installed Xcode release. Unless otherwise stated, the application was compiled and tested using the Debug build configuration, since this was the configuration used during development and simulator-based experimental runs. Recording these hardware and software details is important so that another reader or developer can recreate the same setup and reproduce the experimental procedure under comparable conditions.

Dataset Generation

Data generation is the central point of the experiment. The test data had to be generated in the same format as the data users enter into the app, so that the experiment runs against entries that look like a real user’s workout history. This synthetic data generation process ensures that the benchmark results reflect realistic usage conditions rather than an artificial test environment.

static let exercises = [
    "Bench Press", "Squat", "Deadlift", "Overhead Press",
    "Pull Up", "Barbell Row", "Leg Press", "Incline Bench",
    "Dumbbell Curl", "Tricep Pushdown", "Lat Pulldown", "Cable Fly"
]

static let muscleGroups = ["Chest", "Back", "Legs", "Arms",
                           "Shoulders", "Core"]

This is where the data generation starts. The data generation was designed to be simple and efficient. As the code above shows, there is a fixed list of exercises that the data is generated from, as well as a fixed list of muscle groups. During generation, the loop cycles through the list of exercises so that an equal amount of data is generated for each one. This also approximates what a real user’s data might look like, since ideally training is spread evenly over different exercises and muscle groups. Initializing a fixed list of exercises makes the data generation easier, because each generated entry only needs to be assigned an exercise from the list.

Next, it is important to show what this data looks like in the code. As noted before, the generated data is built in the same format as the real data within the app so that it mirrors a user’s real data as closely as possible.

entries.append(WorkoutEntry(
    date: date,
    muscleGroup: muscleGroup,
    exercise: exercise,
    weight: weight,
    reps: Int.random(in: 3...12)
))

The code above is the format for every piece of generated data that this experiment uses. It uses the same parameters as the real user data: date, muscleGroup, exercise, weight, and reps. These are the values the app needs to produce a full and accurate evaluation so that the user can progress over their time using the app. Because every entry shares the same format, the generation process is simple and takes only a few steps.
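Putting the two fragments together, a generator along these lines could fill the dataset. This is a sketch under assumptions, not the code from the repository: the shortened exercise list, the pairing of each exercise with a muscle group, the one-entry-per-day spacing, and the 45 to 315 pound weight range are all hypothetical choices made for illustration. Only the entry fields and the 3...12 rep range come from the code shown above.

```swift
import Foundation

// Same five fields as the entry format shown above.
struct WorkoutEntry {
    let date: Date
    let muscleGroup: String
    let exercise: String
    let weight: Double
    let reps: Int
}

// Shortened lists for illustration; the pairing by index is an assumption.
let exercises = ["Bench Press", "Squat", "Deadlift", "Overhead Press"]
let muscleGroups = ["Chest", "Legs", "Back", "Shoulders"]

func generateEntries(count: Int, endingAt end: Date = Date()) -> [WorkoutEntry] {
    var entries: [WorkoutEntry] = []
    entries.reserveCapacity(count)
    for i in 0..<count {
        // Cycle through the exercise list so each exercise gets an equal share.
        let slot = i % exercises.count
        // Assumption: one entry per day, counting back from `end`.
        let date = end.addingTimeInterval(-Double(count - 1 - i) * 86_400)
        // Assumption: weights between 45 and 315 lb in 5 lb steps.
        let weight = Double(Int.random(in: 9...63) * 5)
        entries.append(WorkoutEntry(
            date: date,
            muscleGroup: muscleGroups[slot],
            exercise: exercises[slot],
            weight: weight,
            reps: Int.random(in: 3...12)
        ))
    }
    return entries
}

let generated = generateEntries(count: 100)
```

Taking the index modulo the length of the exercise list is what produces the even spread across exercises described earlier.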

Benchmark Methodology

The benchmark methodology begins by generating synthetic workout data and appending it to the dataset list used by the application. This process fills the app with realistic test data before any measurements are taken, ensuring that the benchmark reflects how the app performs when it is already populated with entries similar to those a real user would create. For this experiment, the dataset size was set to 100, 1,000, and 10,000 entries so that performance could be observed under controlled and repeatable conditions at each size.

Performance was measured in milliseconds to provide precise timing results for each tested operation. Each benchmark test was executed 5 times, and the final reported values were calculated as the average of those 5 runs. Averaging the results reduces the effect of temporary system variation and produces a more reliable representation of the app’s typical performance. A single run can be distorted by an outlier, whereas the average of several runs gives a more accurate picture of typical timing.
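The timing procedure can be sketched with a small helper that runs an operation several times and averages the elapsed time in milliseconds. The helper name and the sorting workload below are hypothetical stand-ins; the actual benchmark operations live in the repository linked above.

```swift
import Dispatch

// Runs `operation` `runs` times and returns the mean elapsed time in milliseconds.
func averageMilliseconds(runs: Int = 5, _ operation: () -> Void) -> Double {
    precondition(runs > 0, "At least one run is required")
    var total = 0.0
    for _ in 0..<runs {
        let start = DispatchTime.now().uptimeNanoseconds
        operation()
        let end = DispatchTime.now().uptimeNanoseconds
        total += Double(end - start) / 1_000_000  // nanoseconds to milliseconds
    }
    return total / Double(runs)
}

// Stand-in workload: sort and sum an array at each dataset size.
for size in [100, 1_000, 10_000] {
    let data = (0..<size).map { Double($0 % 300) }
    var checksum = 0.0
    let ms = averageMilliseconds {
        checksum = data.sorted().reduce(0, +)
    }
    print("\(size) entries: \(ms) ms (checksum \(checksum))")
}
```

Keeping a checksum of the result is a simple way to make sure the measured work is actually used and not optimized away.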